We have all come across the terms Graphics Card, CPU, IGPU, TPU, and APU at least once. However, most of us never got around to gathering concrete information about them. Given the rate at which technology progresses, it can be difficult to wrap our heads around these abbreviations, but they make much more sense once we know in detail what they stand for. In this article, we discuss APU, CPU, GPU, TPU, and IGPU in detail.

APU vs CPU vs GPU vs TPU vs IGPU – Comparison

| Characteristic | APU | CPU | GPU | TPU | IGPU |
|---|---|---|---|---|---|
| Specialisation | Parallel computation | Multipurpose | Parallel computation | Matrix processing | Multipurpose |
| Latency | High | Low | High | High | Low |
| Throughput | High | Low | Very high | Very high | Low |
| Compute density | High | Low | High | High | Low |

1. Central Processing Unit (CPU)

The CPU is often described as the brain and heart of the computer. It executes the instructions that make up every program, including requests coming from other components of the computer.

The CPU processes instructions by performing three basic steps and then repeating them:

- Fetch – The CPU checks the program counter, which holds the memory address of the next instruction to run. The CPU reads the instruction stored at that address and advances the program counter.

- Decode – After fetching the instruction, the CPU decodes it. Executable programs are stored as machine code (binary instructions; assembly language is a human-readable form of the same instructions). The decoder works out which operation the instruction specifies and which data it uses, and turns it into signals that control the rest of the CPU.

- Execute – After decoding the instruction, the CPU executes it. It can perform calculations with its arithmetic logic unit (ALU), which is responsible for all mathematical and logical operations. During this stage, it can also move data from one memory location to another or jump to a different address.

- Repeat Cycle – Once the instruction is complete, the processor goes back to the program counter to find the next instruction to run. The cycle repeats as long as further instructions are waiting to be fetched, decoded, and executed.
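The cycle above can be sketched as a toy interpreter. This is a minimal illustration under stated assumptions: the three-instruction set (`LOAD`, `ADD`, `JUMP_IF_LESS`), the single accumulator register, and the `run` function are all hypothetical and not modeled on any real CPU.

```python
# Toy CPU with a hypothetical three-instruction set, used only to illustrate
# the fetch-decode-execute cycle (not a real instruction set architecture).

def run(program: list[tuple], max_steps: int = 100) -> int:
    acc = 0    # accumulator register
    pc = 0     # program counter: index of the next instruction
    steps = 0
    while pc < len(program) and steps < max_steps:
        instruction = program[pc]       # fetch: read the instruction at the PC
        pc += 1                         # advance the PC to the next instruction
        opcode, operand = instruction   # decode: split into operation and operand
        if opcode == "LOAD":            # execute
            acc = operand
        elif opcode == "ADD":
            acc += operand
        elif opcode == "JUMP_IF_LESS":  # a jump overwrites the PC
            limit, target = operand
            if acc < limit:
                pc = target
        else:
            raise ValueError(f"unknown opcode {opcode!r}")
        steps += 1                      # repeat: go back to fetch
    return acc


program = [
    ("LOAD", 0),
    ("ADD", 5),
    ("JUMP_IF_LESS", (20, 1)),  # loop back to ADD while acc < 20
]
print(run(program))  # 20
```

The `max_steps` limit is only a safety net so that a looping program cannot run forever in the sketch.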

How fast a CPU runs is measured in hertz (Hz), where one hertz is one clock cycle per second. Modern CPUs are rated in gigahertz (GHz); one gigahertz is one billion cycles per second. A simple instruction may complete in one cycle, while others take several. This rating is also referred to as “clock speed.”
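To make the units concrete, here is a small calculation of what a clock rating means. The function names are illustrative; the only facts used are that 1 GHz is one billion cycles per second and that the length of one cycle is the reciprocal of the frequency.

```python
# Convert a clock speed in gigahertz into cycles per second and the
# duration of a single clock cycle.

def cycles_per_second(ghz: float) -> float:
    return ghz * 1_000_000_000  # 1 GHz = one billion cycles per second


def cycle_time_ns(ghz: float) -> float:
    return 1 / ghz  # period in nanoseconds, since 1 / (1e9 Hz) = 1 ns


print(cycles_per_second(3.0))        # 3000000000.0
print(round(cycle_time_ns(3.0), 3))  # 0.333
```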

Although a higher clock speed generally meant a faster processor in the past, that is no longer a reliable rule for modern CPUs. Advances in technology have made these chips more efficient, so they do more work per clock cycle. For example, a 3 GHz Intel Core i5 is not necessarily faster than an Intel Core i7 running at 2.80 GHz.
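One simplified way to see why clock speed alone is not enough is to multiply it by the number of instructions completed per cycle (IPC). The IPC values below are hypothetical placeholders, not measurements of the Core i5 and Core i7 mentioned above, and the model ignores cores, cache, and memory effects.

```python
# Simplified model: single-threaded throughput is roughly
# clock speed x instructions per cycle (IPC).
# The IPC values are hypothetical, not measurements of any real CPU.

def instructions_per_second(clock_ghz: float, ipc: float) -> float:
    return clock_ghz * 1e9 * ipc


older_cpu = instructions_per_second(clock_ghz=3.0, ipc=1.5)  # hypothetical
newer_cpu = instructions_per_second(clock_ghz=2.8, ipc=2.0)  # hypothetical

print(f"{older_cpu:.2e}")     # 4.50e+09
print(f"{newer_cpu:.2e}")     # 5.60e+09
print(newer_cpu > older_cpu)  # True
```

Despite its lower clock, the second chip completes more instructions per second because it does more per cycle.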

Apart from clock speed, many other factors influence a CPU’s performance, such as its architecture, cache memory, word length (the number of bits it processes at once), number of cores, and bus speed.

2. Graphics Processing Unit (GPU)

Early computers didn’t have a GPU (at least not the kind we have now), because they had few graphics to render to a display.

The first use of a graphics processing chip can be traced back to the 1970s with arcade game boards. RAM for frame buffers was expensive at the time, so arcade game manufacturers used video chips that composited the graphics data together while the display was being scanned out to the monitor.

The ARTC HD63484, the first CMOS graphics processor for the PC, was released by Hitachi in 1984. It could display up to 4K resolution in monochrome mode and was used in many graphics cards throughout the 1980s. Since then, many manufacturers have created their own versions of the graphics processor.

The term “GPU” did not appear until 1994, when Sony used it to describe the graphics processing chip inside its PlayStation console. However, it was Nvidia that popularized the term in 1999, calling its GeForce 256 “the world’s first GPU”: a single-chip processor with integrated transform, lighting, triangle setup/clipping, and rendering engines.

Basically, the GPU works on signals from the CPU and renders the instructions the CPU has decoded. To put it simply, the CPU works out what happens when a car strikes a tree, and the GPU draws the result of that collision. The GPU performs fast mathematical calculations so that the CPU is free for other tasks.
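The division of labor described above can be sketched as two functions, one per role. Everything here is hypothetical: `cpu_update` stands in for game logic on the CPU and `gpu_render` for rendering on the GPU, and both run as ordinary Python, so this models the roles rather than real hardware.

```python
# Hypothetical split of one game frame: the "CPU" side runs game logic and
# emits draw commands; the "GPU" side turns those commands into pixels.

WIDTH = 10  # width of a one-row framebuffer


def cpu_update(car_x: int, speed: int, tree_x: int) -> tuple[int, bool, list[tuple[str, int]]]:
    """Game logic: move the car, stopping it just before the tree on impact."""
    crashed = car_x + speed >= tree_x
    new_x = min(car_x + speed, tree_x - 1)
    commands = [("tree", tree_x), ("car", new_x)]
    return new_x, crashed, commands


def gpu_render(commands: list[tuple[str, int]]) -> str:
    """Rendering: fill the framebuffer from the draw commands."""
    glyphs = {"tree": "T", "car": "C"}
    framebuffer = ["."] * WIDTH
    for kind, x in commands:
        framebuffer[x] = glyphs[kind]
    return "".join(framebuffer)


x, crashed, cmds = cpu_update(car_x=2, speed=3, tree_x=8)
print(gpu_render(cmds), crashed)  # .....C..T. False
x, crashed, cmds = cpu_update(car_x=x, speed=3, tree_x=8)
print(gpu_render(cmds), crashed)  # .......CT. True
```

The CPU side decides *what* happened (the crash); the GPU side only draws what it is told.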

3. Integrated Graphics Processing Unit (IGPU)

Unlike a dedicated GPU, an Integrated Graphics Processing Unit (IGPU) is built into the computer’s processor and doesn’t have a separate memory bank for graphics/video. Instead, an IGPU uses the system memory, and it generally uses less power, producing less heat and allowing a longer battery life.

Intel’s Rocket Lake CPUs are among the latest generation of processors to include integrated graphics (based on Intel’s Xe architecture). Computers that come with an IGPU have several benefits: they’re usually compact, energy-efficient, and less expensive than the ones with a dedicated GPU.

The majority of modern processors have an IGPU. Even in a system with a dedicated graphics card, the system can automatically switch between the two for better efficiency. However, IGPUs are used primarily in compact devices such as laptops, tablets, smartphones, and budget desktop computers. While IGPUs still struggle with demanding workloads, they are handy for casual gaming and 4K video playback.

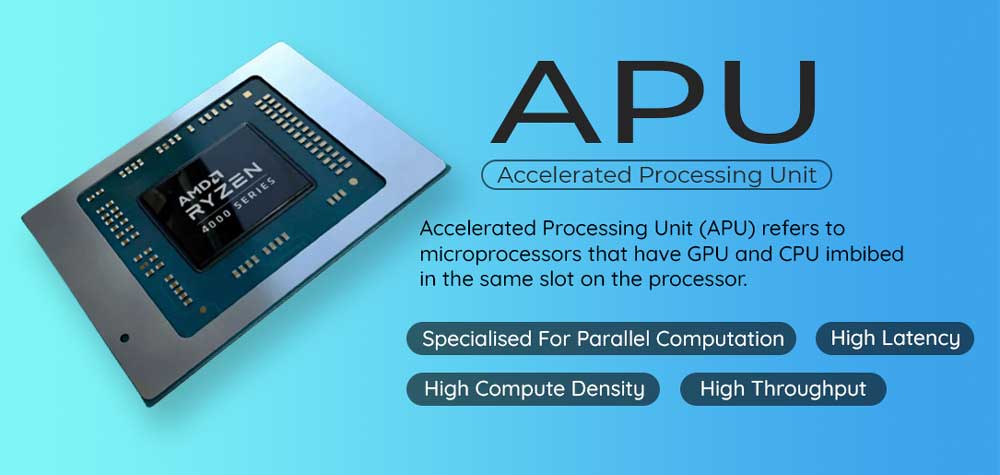

4. AMD Accelerated Processing Unit (APU)

Introduced by AMD, an AMD Accelerated Processing Unit (APU) is a microprocessor that combines a CPU and a GPU on the same die. These 64-bit microprocessors feature onboard integrated graphics, offering the best of both worlds by combining CPU and GPU in a single package. Using its GPU architecture, AMD was able to deliver better graphics than Intel’s integrated chips.

Many AMD APUs are HSA (Heterogeneous System Architecture) compliant, which lets the CPU and GPU share the same system bus, memory, and tasks. Because the CPU and GPU sit on the same die, they can share resources such as RAM, which makes the design highly efficient. Other significant advantages of an AMD APU are a noticeable increase in power efficiency and performance and a sizeable decrease in manufacturing costs.
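Shared memory is the key difference here, and a Python analogy can illustrate it. This is only an analogy, not HSA code: a `bytes` copy stands in for data transferred to a discrete GPU’s own memory, and a `memoryview` stands in for an APU’s CPU and GPU looking at the same buffer in system RAM.

```python
# Analogy only: a discrete GPU typically works on its own copy of the data,
# which has to be transferred again after every change, while an APU's CPU
# and GPU can look at one shared buffer in system memory.

shared = bytearray(8)            # one buffer in "system memory", all zeros

discrete_copy = bytes(shared)    # separate copy (like a transfer to VRAM)
apu_view = memoryview(shared)    # zero-copy view of the same memory

shared[0] = 42                   # the "CPU" writes a value

print(discrete_copy[0])  # 0  (the copy is stale until transferred again)
print(apu_view[0])       # 42 (the shared view sees the write immediately)
```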

Although an APU offers fairly limited performance compared with a separate CPU and GPU, it still has overclocking potential similar to its counterparts.

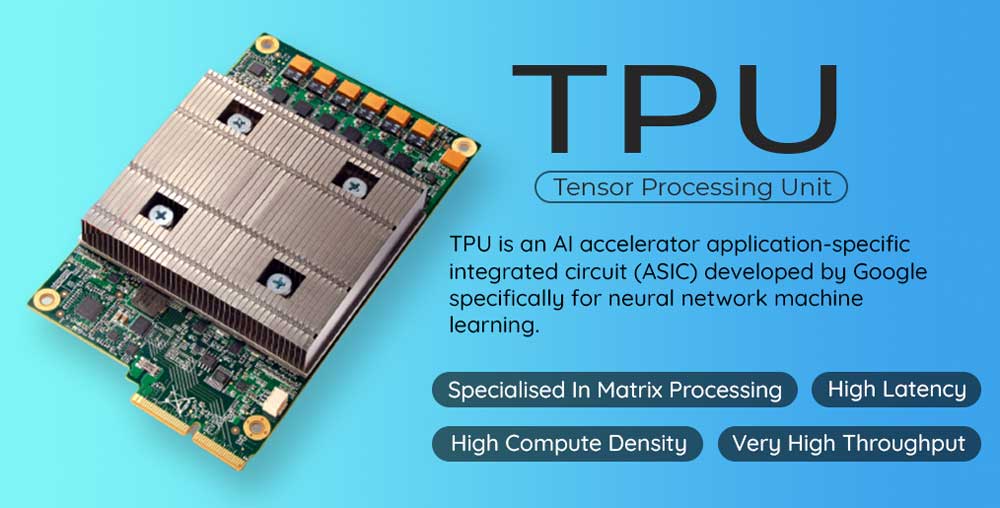

5. Tensor Processing Unit (TPU)

A tensor processing unit (TPU) is an AI accelerator application-specific integrated circuit (ASIC) developed by Google specifically for neural network machine learning. The tensor processing unit was announced in 2016 at Google I/O. At that time, TPUs were already in use in Google’s data centers, and the chip was designed specifically to run Google’s TensorFlow framework.

With machine learning taking a huge leap over the years, the increased demand for specialized computing resources led to the development of TPUs. Designed primarily for neural network machine learning, TPUs are used mainly for the large volumes of matrix calculations that neural networks require. Google reported that its first-generation TPU ran its production neural network inference workloads about 15 to 30 times faster than the contemporary CPUs and GPUs it was compared against. Newer generations of these custom-built processors have been reported to be almost 2.5 times faster than earlier ones.
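The core workload a TPU accelerates is matrix arithmetic built from multiply-accumulate (MAC) operations. The sketch below computes one dense neural network layer, y = Wx + b, with explicit MACs; the weights and inputs are made-up values, and it runs sequentially, whereas a TPU’s matrix unit performs many such MACs at once.

```python
# A dense neural-network layer, y = W x + b, written as explicit
# multiply-accumulate (MAC) operations, run one at a time in Python.

def dense_layer(weights: list[list[float]], x: list[float], bias: list[float]) -> list[float]:
    outputs = []
    for row, b in zip(weights, bias):
        acc = b
        for w, xi in zip(row, x):
            acc += w * xi  # one multiply-accumulate
        outputs.append(acc)
    return outputs


W = [[1.0, 2.0, 3.0],
     [0.5, -1.0, 0.0]]
x = [1.0, 1.0, 2.0]
b = [0.0, 1.0]
print(dense_layer(W, x, b))  # [9.0, 0.5]
```

A 2 × 3 weight matrix needs 6 MACs; real networks need billions per inference, which is why dedicated matrix hardware pays off.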

FAQs

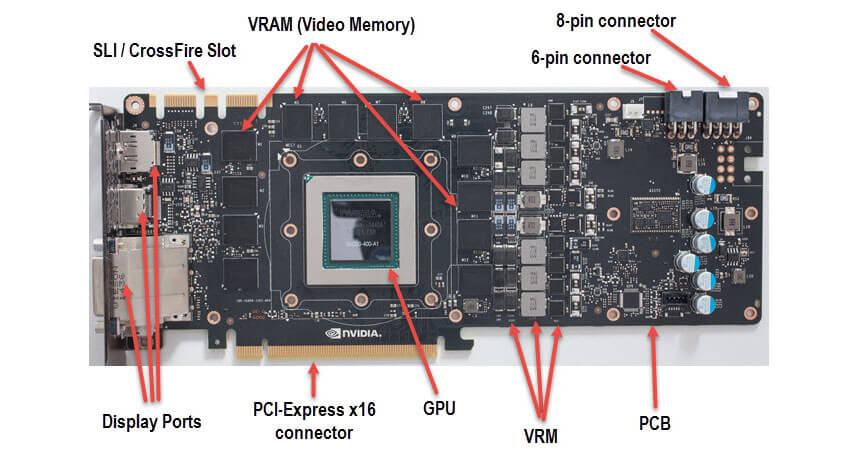

1. Are GPU and Graphics Card the same?

Well, sort of. A graphics card cannot work without a GPU: if the CPU is the brain of the whole computer, the GPU is the brain of the graphics card. This is where things get a little different, because there are two types of graphics processors: integrated and dedicated (also referred to as discrete).

Integrated graphics are built into the motherboard or the CPU. They use a portion of the computer’s system RAM instead of having their own dedicated memory. Integrated graphics are generally less powerful than dedicated graphics but more power-efficient.

Since integrated graphics are built into the motherboard (or CPU), they can never be removed for an upgrade. However, some computers let you disable the integrated chip in the BIOS so that a dedicated graphics processor, which offers better graphics performance, takes over. This dedicated graphics processor comes on a board called a graphics card or video card.

Note that although both integrated and dedicated graphics processors can be called a graphics card, today, when people say graphics card, they usually mean a dedicated graphics processing system.

A graphics card works the same way as integrated graphics, but it is more powerful because it has its own hardware. It is a complete board with several components: its own graphics processing unit (GPU), random access memory (RAM), and, on cards with analog video outputs, a digital-to-analog converter (DAC).

To sum up, the GPU is the graphics processor inside the graphics card. In modern terms, a graphics card is a graphics processing system that is not built into the motherboard and has its own hardware (dedicated or discrete graphics). However, integrated graphics can also be referred to as a graphics card, and some sources call the graphics card a video card.

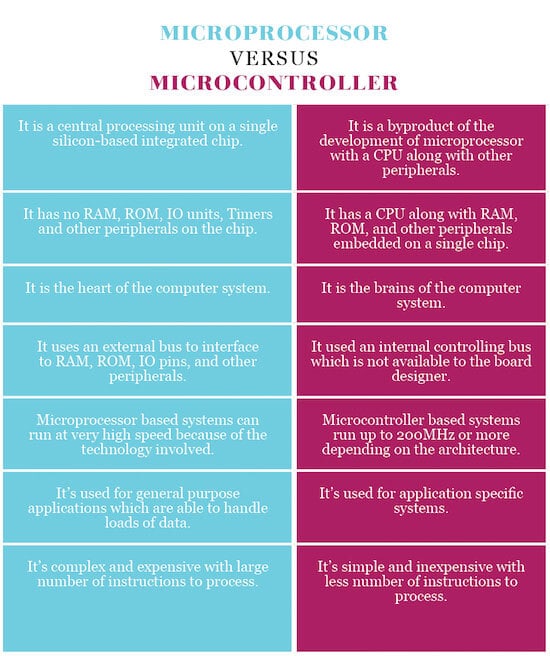

2. Do System-on-Chip (SoC) and CPU work the same?

On computers, we have a CPU and GPU. On smartphones, on the other hand, we have what is called a System-on-Chip (SoC). A CPU is referred to as a “microprocessor,” while an SoC is sometimes referred to as a “microcontroller.”

The CPU is the heart and brain of the SoC. An SoC is an integrated circuit that has all the components needed to make the system run: its own integrated RAM, ROM, GPU, CPU, and many other small processors that make our smartphones and tablets work.

A modern SoC like the Qualcomm Snapdragon 855, for example, integrates a cellular modem into the chip, as well as a digital signal processor (DSP), an image signal processor (ISP), an artificial intelligence engine (AIE), Wi-Fi, Bluetooth, NFC, GPS, and support for all sorts of sensors. Together with the CPU and GPU, these make up the SoC. Nonetheless, the CPU inside an SoC works the same way as a standalone CPU.

3. How do CPU and GPU operate?

Both the CPU and GPU are very important components of a computer. While they differ in most operations, neither can do its job without the other.

Let’s put it this way. When we play Assassin’s Creed on a computer, every time we move our character, perform a parkour move, or fight enemies, it is the CPU that calculates these movements, because it handles the game’s physics. However, to render the resulting images, the CPU needs help from the GPU, which translates these instructions into images.

CPU vs GPU Example

In other words, the CPU processes the game’s instructions and the player’s inputs, then tells the GPU what to render. A better CPU means faster processing cycles, and a better GPU means shorter render times; together these translate into higher frame rates when gaming.
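The link between render times and frame rates can be sketched with a simplified pipeline model. It assumes the CPU prepares the next frame while the GPU renders the current one, so the slower of the two sets the frame time; the millisecond figures are hypothetical.

```python
# Simplified pipeline model: CPU work on the next frame overlaps with GPU
# rendering of the current one, so the slower stage sets the frame time.
# All millisecond figures are hypothetical.

def frames_per_second(cpu_ms: float, gpu_ms: float) -> float:
    frame_ms = max(cpu_ms, gpu_ms)  # the bottleneck stage
    return 1000.0 / frame_ms


print(frames_per_second(cpu_ms=8.0, gpu_ms=20.0))   # 50.0  (GPU-bound)
print(frames_per_second(cpu_ms=8.0, gpu_ms=10.0))   # 100.0 (faster GPU)
print(frames_per_second(cpu_ms=12.0, gpu_ms=10.0))  # about 83.3 (now CPU-bound)
```

Under this model, upgrading only one component helps until the other becomes the bottleneck.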

In terms of the range of tasks it can handle, the GPU can do only a fraction of what the CPU is capable of. The CPU is more flexible because it has a larger instruction set than the GPU, despite having far fewer cores. The CPU can also run at higher clock speeds.

While the GPU is limited in the kinds of operations it handles, it does that work at incredible speed. The GPU uses hundreds or thousands of cores to make time-sensitive calculations for thousands of pixels at a time, making it possible to display complex 3D graphics.
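The GPU can work on thousands of pixels at once because each pixel is computed independently by the same small program. The `shade` function below is a hypothetical stand-in for such a shader; this Python version simply loops over the pixels, where a GPU would assign them to separate threads.

```python
# The same small "shader" runs once per pixel, and no pixel depends on
# another. That independence is what lets a GPU spread the work across many
# cores; this sketch just loops over the pixels sequentially.

WIDTH, HEIGHT = 4, 3


def shade(x: int, y: int) -> int:
    """Hypothetical shader: a horizontal brightness gradient (0-255); y is unused."""
    return round(255 * x / (WIDTH - 1))


def render() -> list[list[int]]:
    # On a GPU, each (x, y) pair would be handled by its own thread.
    return [[shade(x, y) for x in range(WIDTH)] for y in range(HEIGHT)]


for row in render():
    print(row)  # [0, 85, 170, 255] on every row
```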

Nvidia’s RTX 2080, for example, features 2944 shading cores, which means it can perform 2944 operations in parallel per clock cycle. In comparison, a quad-core Intel Core i5 or Core i7 has only four cores to run instructions in parallel, while a dual-core budget Core i3 has only two. This is one of the reasons why good GPUs are used for cryptocurrency mining.
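A back-of-the-envelope calculation shows the scale of the gap. It assumes a GPU boost clock of about 1.71 GHz and one operation per core per cycle, and compares it with a hypothetical 3.5 GHz quad-core CPU issuing one instruction per core per cycle. Real CPU cores issue several instructions per cycle and use SIMD units, so this understates the CPU; treat the ratio as illustrative only.

```python
# Back-of-the-envelope throughput = parallel units x clock speed.
# Assumptions: ~1.71 GHz GPU clock with one operation per core per cycle,
# and a hypothetical 3.5 GHz quad-core CPU with one instruction per core
# per cycle (real CPUs do more per cycle, so this understates them).

def ops_per_second(units: int, clock_ghz: float) -> float:
    return units * clock_ghz * 1e9


gpu = ops_per_second(units=2944, clock_ghz=1.71)
cpu = ops_per_second(units=4, clock_ghz=3.5)

print(f"GPU: {gpu:.2e} ops/s")  # GPU: 5.03e+12 ops/s
print(f"CPU: {cpu:.2e} ops/s")  # CPU: 1.40e+10 ops/s
print(round(gpu / cpu))         # 360
```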

The Bottom Line

Keeping up with modern-day abbreviations is challenging, which is why we hope this article helped explain what APU, CPU, GPU, TPU, and IGPU stand for. When buying a setup for work or building a full-fledged gaming rig, we should pay close attention to the system’s motherboard, graphics, and especially the processor. Knowing about these processing units is vital if you are about to buy or build a new gaming rig.

On-chip memory means that when the processor needs some data, instead of fetching it from main memory, it fetches it from a component built into the processor itself. Data that the processor needs frequently is prefetched from main memory into SRAM to reduce the delay, or latency. SRAM is used as on-chip storage because its latency is low (as little as a single-cycle access time), and it acts as a cache for DRAM.
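The effect of an SRAM cache can be sketched with a toy model. The latencies are hypothetical cycle counts, the cache fills on demand (no prefetching) and evicts the least recently used address, and the `Cache` class is illustrative rather than how any particular processor implements its cache.

```python
from collections import OrderedDict

# Hypothetical latencies in clock cycles, chosen only for illustration.
SRAM_LATENCY = 1
DRAM_LATENCY = 100


class Cache:
    """Toy on-chip SRAM cache in front of DRAM with least-recently-used eviction."""

    def __init__(self, capacity: int):
        self.capacity = capacity
        self.lines = OrderedDict()  # address -> cached value, oldest first

    def read(self, address: int, dram: dict) -> tuple:
        """Return (value, cycles spent) for one memory read."""
        if address in self.lines:            # hit: served from SRAM
            self.lines.move_to_end(address)  # mark as most recently used
            return self.lines[address], SRAM_LATENCY
        value = dram[address]                # miss: go out to DRAM
        self.lines[address] = value          # keep a copy on chip
        if len(self.lines) > self.capacity:
            self.lines.popitem(last=False)   # evict the least recently used
        return value, DRAM_LATENCY


dram = {addr: addr * 10 for addr in range(16)}
cache = Cache(capacity=4)
accesses = [0, 1, 0, 1, 0, 1, 2, 0]
cycles = sum(cache.read(addr, dram)[1] for addr in accesses)
print(cycles)                        # 305
print(len(accesses) * DRAM_LATENCY)  # 800 without the cache
```

Only the three first-time reads pay the DRAM cost; every repeat access is served from the fast on-chip copy.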

Simple Linear Model?

As we know, the concepts of the artificial perceptron and the artificial neuron were based on the human brain: they are meant to work like biological neurons. This idea took shape back in the 1950s, when scientists studied the human brain.
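A perceptron, the simplest such model, computes a weighted sum of its inputs plus a bias and passes it through a step function. In the sketch below the weights are hand-picked (hypothetical) so that the perceptron computes logical AND; training is not shown.

```python
# A single perceptron: a weighted sum of the inputs plus a bias, passed
# through a step function. The hand-picked weights make it compute AND.

def perceptron(inputs: list[float], weights: list[float], bias: float) -> int:
    total = sum(w * x for w, x in zip(weights, inputs)) + bias  # linear part
    return 1 if total > 0 else 0                                # step activation


WEIGHTS = [1.0, 1.0]  # hypothetical, hand-picked
BIAS = -1.5

for a in (0, 1):
    for b in (0, 1):
        print(a, b, perceptron([a, b], WEIGHTS, BIAS))
# prints 0 0 0, 0 1 0, 1 0 0, 1 1 1 on separate lines
```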