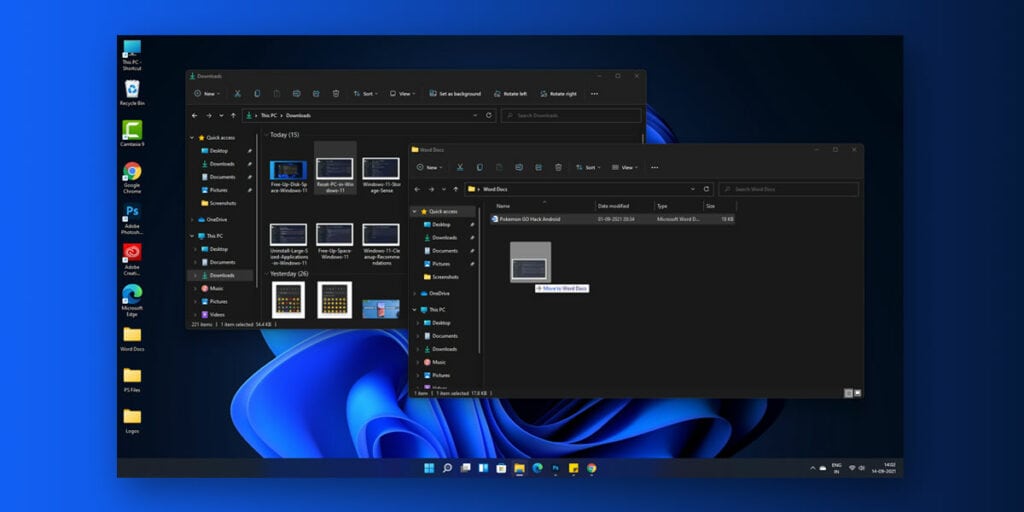

People running Windows 10 on compatible hardware have already received the Windows 11 update. However, some users have raised concerns after upgrading, particularly about the drag-and-drop feature that was available in Windows 10 but is missing in Windows 11.

![Fix: Android 13 VPN Issues [8 Fixes]](https://devsjournal.com/wp-content/uploads/2023/07/Android-13-VPN-not-working-300x150.jpg)